Testing concurrent programs is notoriously tricky: the exact sequence of actions is non-deterministic, and some bugs only appear when actions happen to occur at specific times. There are various aspects of CHP that particularly warrant testing:

- The central event-synchronisation mechanism (which underlies channels, barriers, choice and conjunction).

- The surrounding communication mechanisms (i.e. the exchange of data) for channels.

- The monad, poison, and all the associated semantics.

- Support for tracing.

- Wiring combinators (especially the four-way and eight-way grid wiring).

In this post, I’ll talk about the first item: testing the central event-synchronisation mechanism. This is absolutely crucial to the correct functioning of CHP: if there’s a problem in this algorithm, it will affect any code that uses CHP. The algorithm itself is effectively a search algorithm, which looks for events that can take place. The input to the search algorithm is scattered across several Software Transactional Memory (STM) TVars (Transactional Variables) throughout the system, and the search takes place in a single transaction.

Testing STM-Based Algorithms

The really nice thing about testing an STM-based search algorithm is that we don’t actually have to worry about the concurrent aspects at all. An STM transaction is guaranteed to be free of interference from other transactions. In our test harness, we set up all the TVars to our liking, run the search transaction and look at the results — and this is a perfectly suitable test, even if the real system will have several competing transactions running at different times.
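As a rough illustration of that harness shape (this is not CHP's actual internals), the sketch below uses a toy stand-in for the search. Each hypothetical `ToyEvent` holds an enrollment count and a `TVar` counting the current offers, and `readyEvents` reports which events have exactly enough offers. The names `ToyEvent`, `readyEvents` and `runToyTest` are invented for this example; only the `Control.Concurrent.STM` calls are real library functions.

```haskell
import Control.Concurrent.STM (STM, TVar, atomically, newTVarIO, readTVar)

-- Hypothetical stand-in for an event: a fixed enrollment count plus a
-- TVar holding the number of processes currently offering on it.
data ToyEvent = ToyEvent
  { enrolled :: Int
  , offering :: TVar Int
  }

-- Toy "search": the indices of events whose offer count equals their
-- enrollment count. It only reads TVars, all inside one transaction.
readyEvents :: [ToyEvent] -> STM [Int]
readyEvents evs = do
  counts <- mapM (readTVar . offering) evs
  return [i | (i, e, n) <- zip3 [0 ..] evs counts, n == enrolled e]

-- Test harness: set up the TVars to our liking, run the search in a
-- single transaction, and hand back the result for inspection.
-- Each pair is (enrollment count, current number of offers).
runToyTest :: [(Int, Int)] -> IO [Int]
runToyTest spec = do
  evs <- mapM (\(en, n) -> ToyEvent en <$> newTVarIO n) spec
  atomically (readyEvents evs)
```

Calling `runToyTest [(2, 2), (3, 1), (1, 1)]` sets up three events and runs the toy search once. No extra threads are needed, because the transaction sees a consistent snapshot of the TVars either way.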

The Search Algorithm to be Tested

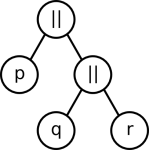

Our search algorithm looks at all of the processes and finds which events (if any) can currently synchronise. Each process makes a set of offers (or offer-set) — essentially saying that it wants to complete one of the offers. Each offer is a conjunction of events that need to be completed together. An event can complete if, and only if, the number of processes making an offer on it is equal to the event’s enroll count, and all the appropriate offers can complete. Time for an example! Let’s say we have processes p, q, and r which have the code:

    p = readChannel c <|> readChannel d
    q = writeChannel c 0
    r = writeChannel d 0 <|> (syncBarrier a <&> syncBarrier b)

Barrier “a” has an enrollment count of 1, barrier “b” has an enrollment count of 3, and the channels are all one-to-one, so they have a fixed enrollment count of 2. We say that p has an offer-set with two offers: one offer contains just “c”, the other offer contains just “d”. Process q has an offer-set with one offer, which contains just “c”. Process r has an offer-set with two offers: one contains just “d” and the other contains “a” and “b”. The answer from the search algorithm in this situation is that either p and q can communicate on channel c, or p and r can communicate on channel d.
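One way to make the completion rule concrete is a small pure model. This is a sketch under my own simplifying assumptions rather than anything taken from CHP: events are indices into a list of enrollment counts, an offer is a list of event indices, and a candidate answer is a list of (process, chosen offer) pairs. The names `completes`, `blogEnrolls` and `blogProcs` are hypothetical.

```haskell
import Data.List (nub)

type Offer     = [Int]         -- the events conjoined in one offer
type OfferSet  = [Offer]       -- the offers made by one process
type Selection = [(Int, Int)]  -- (process index, chosen-offer index)

-- A selection completes when each process appears at most once and every
-- event in a chosen offer is chosen by exactly as many processes as its
-- enrollment count. Indices are assumed to be in range.
completes :: [Int] -> [OfferSet] -> Selection -> Bool
completes enrolls procs sel =
  not (null sel) && distinctProcs && all exactlyEnrolled events
  where
    distinctProcs     = length (nub (map fst sel)) == length sel
    chosen            = [procs !! p !! i | (p, i) <- sel]
    events            = nub (concat chosen)
    exactlyEnrolled e = length (filter (e `elem`) chosen) == enrolls !! e

-- The example from the text: events a, b, c, d are indices 0, 1, 2, 3.
blogEnrolls :: [Int]
blogEnrolls = [1, 3, 2, 2]

blogProcs :: [OfferSet]
blogProcs =
  [ [[2], [3]]     -- p: c or d
  , [[2]]          -- q: c
  , [[3], [0, 1]]  -- r: d, or a and b together
  ]
```

Under this model, the selections `[(0, 0), (1, 0)]` and `[(0, 1), (2, 0)]` both complete, while `[(2, 1)]` does not, because barrier b has only one of its three enrolled processes offering.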

Writing Individual Test Cases

So we have an example, but now we need to code that up. This involves creating events for a, b, c and d, setting their enrollment counts, recording all the offer-sets — and then running the search that we actually want to test. If we had to spell that out long-hand for every test, we would soon be bored stiff. So of course we make some helper functions to allow us to specify the test as simply and clearly as possible — in effect, we can create a testing EDSL (Embedded Domain Specific Language). Here’s my code that’s equivalent to testing our previous example:

    testD "Blog example" $ do
      [a,b,c,d] <- mapM evt [1,3,2,2]
      p <- offer [c, d]
      q <- offer [c]
      r <- offer [d, a&b]
      return $ (p ~> 0 & q ~> 0) `or` (p ~> 1 & r ~> 0)

The first line in the do-block creates our events with associated counts. The next lines make all the offers that we discussed. Note that the distinction between channels and barriers has vanished: it was useful for relating the example to actual CHP code, but underneath, both channels and barriers use the event mechanism, which is what we’re actually testing here. The final line declares the possible results: either p will choose item 0 in its list of offers (that’s “c”) and q will choose item 0 (also “c”), or p will choose item 1 and r will choose item 0 (both “d”).

The nice thing here is that the test is easy to read, and corresponds very closely to what I wanted to express. Writing lots more tests is easy — which encourages me to do so, and thus my function ends up better tested. I’ve written about 25 of these tests, each aiming at testing different cases.

Implementation of my Testing EDSL

I’ll show some of the details here of how I put together my testing EDSL. The monad it uses is just a wrapper around the state monad, building up a list of events, and offers on those events:

    newtype EventDSL a = EventDSL (State ([EventInfo], [CProcess]) a)
      deriving (Functor, Applicative, Monad)  -- needs GeneralizedNewtypeDeriving

    data EventInfo = EventInfo {eventEnrolled :: Int}
      deriving (Eq, Show)

    type CProcess = [CEvent]

    -- The list of conjoined events
    newtype CEvent = CEvent {cEvent :: [Int]}

Events and offers are represented simply by a wrapper around an integer (an index into the lists stored in our state) — we use newtypes to stop all the Ints getting confused with each other, and to stop accidental manipulation of them in the DSL. Our evt and offer functions are then trivial manipulations of the state:

    evt :: Int -> EventDSL CEvent
    evt n = EventDSL $
      do (evts, x) <- get
         put (evts ++ [EventInfo n], x)
         return $ CEvent [length evts]

    newtype COffer = COffer {cOffer :: Int} deriving (Eq)

    offer :: [CEvent] -> EventDSL COffer
    offer o = EventDSL $
      do (x, ps) <- get
         put (x, ps ++ [o])
         return $ COffer (length ps)

To allow use of the ampersand operator in two different places (the input and the result), we add a type-class:

    class Andable c where
      (&) :: c -> c -> c

    instance Andable CEvent where
      (&) (CEvent a) (CEvent b) = CEvent (a ++ b)

For the expected result, we use this data type:

    data Outcome = Many [[(Int, Int)]]

Each item in the list is a different possible outcome of the test (when there are multiple results to be found, we want to allow the algorithm to pick any of them, while still passing the test). Each outcome is a list of (process index, chosen-offer index) pairs. We have some combinators for building this up:

    (~>) :: COffer -> Int -> Outcome
    (~>) (COffer p) i = Many [[(p, i)]]

    instance Andable Outcome where
      (&) (Many [a]) (Many [b]) = Many [a++b]

    or :: Outcome -> Outcome -> Outcome
    or (Many a) (Many b) = Many (a ++ b)

Ultimately our test has type EventDSL Outcome; when we run the inner state monad, we get back (([EventInfo], [CProcess]), Outcome). This forms the input and expected result for the test, which we feed to our helper functions (too long and boring to be worth showing here) that use HUnit to run the tests.
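To see what running the inner state monad yields, here is a self-contained sketch that assembles the fragments above into one module. Several details are my assumptions rather than the original code: the `State` type comes from the transformers package, the fixity declarations are a guess at what makes `p ~> 0 & q ~> 0` parse as intended, `runEventDSL` is a hypothetical helper, and the `Functor`/`Applicative` instances are derived because recent GHC versions require them. The events are bound one at a time so that no `MonadFail` instance is needed for a list pattern.

```haskell
{-# LANGUAGE GeneralizedNewtypeDeriving #-}
import Control.Monad.Trans.State (State, get, put, runState)
import Prelude hiding (or)

-- Assumed fixities: (~>) binds tighter than (&).
infix  6 ~>
infixl 5 &

newtype EventDSL a = EventDSL (State ([EventInfo], [CProcess]) a)
  deriving (Functor, Applicative, Monad)

data EventInfo = EventInfo {eventEnrolled :: Int} deriving (Eq, Show)
type CProcess = [CEvent]
newtype CEvent = CEvent {cEvent :: [Int]} deriving (Eq, Show)
newtype COffer = COffer {cOffer :: Int} deriving (Eq, Show)
data Outcome = Many [[(Int, Int)]] deriving (Eq, Show)

-- Register an event with the given enrollment count.
evt :: Int -> EventDSL CEvent
evt n = EventDSL $ do
  (evts, ps) <- get
  put (evts ++ [EventInfo n], ps)
  return (CEvent [length evts])

-- Register a process's offer-set and return its index.
offer :: [CEvent] -> EventDSL COffer
offer o = EventDSL $ do
  (evts, ps) <- get
  put (evts, ps ++ [o])
  return (COffer (length ps))

class Andable c where
  (&) :: c -> c -> c

instance Andable CEvent where
  CEvent a & CEvent b = CEvent (a ++ b)

instance Andable Outcome where
  Many [a] & Many [b] = Many [a ++ b]
  _ & _ = error "(&) on Outcome expects two single outcomes"

(~>) :: COffer -> Int -> Outcome
COffer p ~> i = Many [[(p, i)]]

or :: Outcome -> Outcome -> Outcome
or (Many a) (Many b) = Many (a ++ b)

-- Hypothetical helper: run from empty state, returning the accumulated
-- input first and the expected outcome second.
runEventDSL :: EventDSL a -> (([EventInfo], [CProcess]), a)
runEventDSL (EventDSL m) = let (x, st) = runState m ([], []) in (st, x)

blogExample :: EventDSL Outcome
blogExample = do
  a <- evt 1
  b <- evt 3
  c <- evt 2
  d <- evt 2
  p <- offer [c, d]
  q <- offer [c]
  r <- offer [d, a & b]
  return $ (p ~> 0 & q ~> 0) `or` (p ~> 1 & r ~> 0)
```

Evaluating `runEventDSL blogExample` in GHCi shows the three pieces side by side: the enrollment counts, each process's offer-set as lists of event indices, and the two acceptable outcomes.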

Property-based Testing

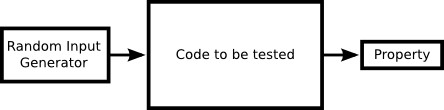

Probably Haskell’s most famous testing framework is QuickCheck (its cleverer cousin, Lazy Smallcheck, deserves to be just as well-known). This uses property-based testing: you specify a property and it generates random input, and checks that the property holds. This diagram is how I believe property-based testing is intended to work:

The size of the box is meant to indicate effort; the biggest bit of code is what you’re testing, you may need some effort to generate random input data, and then you check that some simple properties hold on the output.

So let’s think about some simple properties of our search algorithm. I can think of some:

- Any events that complete must have the same number of processes choosing them as the enroll count.

- Any events that don’t have enough processes offering definitely can’t complete.

- Processes can’t select an offer index that’s invalid (e.g. if p has 2 offers, it can’t select offer 3).
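As a sketch of how simple such checks are, the second and third properties can be written as plain predicates over a search result. The representation is assumed for illustration and is not CHP's: an offer is a list of event indices, a result is a list of (process, chosen offer) pairs, and the names `indicesValid`, `offerers` and `noStarvedEvents` are hypothetical.

```haskell
import Data.List (nub)

type OfferSet  = [[Int]]       -- one process: each offer lists event indices
type Selection = [(Int, Int)]  -- (process index, chosen-offer index)

-- Third property: every chosen index names an existing process and one
-- of the offers that process actually made.
indicesValid :: [OfferSet] -> Selection -> Bool
indicesValid procs sel =
  and [ p >= 0 && p < length procs && i >= 0 && i < length (procs !! p)
      | (p, i) <- sel ]

-- How many processes have at least one offer that mentions event e.
offerers :: [OfferSet] -> Int -> Int
offerers procs e = length [() | offers <- procs, any (e `elem`) offers]

-- Second property: no event that the result claims to complete has
-- fewer offering processes than its enrollment count.
-- Assumes the selection has already passed indicesValid.
noStarvedEvents :: [Int] -> [OfferSet] -> Selection -> Bool
noStarvedEvents enrolls procs sel =
  and [offerers procs e >= enrolls !! e | e <- completed]
  where
    completed = nub (concat [procs !! p !! i | (p, i) <- sel])
```

With the earlier example (enrollment counts 1, 3, 2, 2), a result in which r picks its second offer fails `noStarvedEvents`, since barrier b has one offerer but an enrollment count of 3.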

All of these properties seem to me to be pussyfooting around the main property of the search algorithm that I want to test:

- The search algorithm finds a correct answer when one is available.

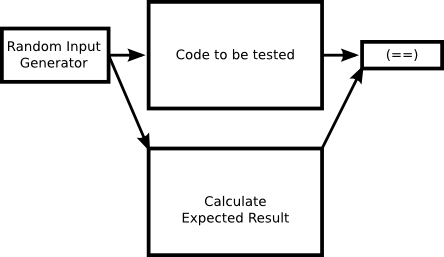

That certainly sounds simple. But how do we know what the possible correct answers to the search algorithm are? Well, we need to write a search algorithm! Often when I come to use QuickCheck, how it ends up working is this:

It feels a lot like double-entry validation; to calculate the expected result, I must code the same algorithm again! In this instance, I wrote a brute-force naive search that is checked against my optimised clever search. I’m not sure if this is still technically property-based testing, but QuickCheck is still handy here for generating the random input. (I guess a search algorithm is a bad choice for using property-based testing?) Regardless: I wrote my brute force search and tested my algorithm. It found no bugs, but now I have two sets of tests supporting me: one set of hand-crafted interesting tests, and a second version of the algorithm used with randomly generated input to pick up the cases I might have missed.
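The shape of such a reference check might look like the following sketch. It is not the brute-force search from my test suite: it simply enumerates every way for some subset of processes to each pick one offer, filters with a caller-supplied validity rule, and compares the optimised search's answer against that set. The names `candidates`, `bruteForce` and `agreesWith` are hypothetical, and the random input generation that QuickCheck would provide is left out.

```haskell
import Data.List (sort)

type Selection = [(Int, Int)]  -- (process index, chosen-offer index)

-- Every non-empty way of letting some processes each pick one offer.
-- The argument gives the number of offers made by each process.
candidates :: [Int] -> [Selection]
candidates offerCounts = filter (not . null) (go (zip [0 ..] offerCounts))
  where
    go [] = [[]]
    go ((p, n) : rest) =
      [ choice ++ sel
      | choice <- [] : [[(p, i)] | i <- [0 .. n - 1]]  -- sit out, or pick offer i
      , sel <- go rest
      ]

-- Reference answer: every candidate that the validity rule accepts.
bruteForce :: (Selection -> Bool) -> [Int] -> [Selection]
bruteForce valid = filter valid . candidates

-- The property: the optimised search may return Nothing only when the
-- reference finds nothing, and any answer it does return must be one of
-- the reference answers (ignoring the order of the pairs).
agreesWith :: [Selection] -> Maybe Selection -> Bool
agreesWith expected Nothing    = null expected
agreesWith expected (Just sel) = sort sel `elem` map sort expected
```

The enumeration is exponential in the number of processes, which is fine for a reference implementation fed with small random inputs; its only job is to be obviously correct.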

Summary

This post has been a bit light on technical detail, mainly because testing the algorithm involves a lot of dealing with the internals of my library that would take too long to explain here, and which would not be very interesting. My summary is this: constructing testing EDSLs makes your unit tests clearer to read and easier to write, which in turn encourages you to write more tests. I haven’t found property-based testing as useful as unit tests, but if you have a simple dumb solution and you want to check your optimised clever solution maintains the same behaviour, QuickCheck (or Lazy SmallCheck) is a handy way to test equality of functions.
